On this day in history, Kerry Miller (Library Research Support) and Laura Klinkhamer (Edinburgh Open Research Initiative and ReproducibiliTea) delivered a packed programme of speakers, workshops, and poster presentations.

Attendees online and in person were treated to a fine and varied selection of talks. To begin with, topics ranged from Gavin McLachlan’s overview of current national and international political contexts and Dominic Tate’s review of the University’s Open Research Roadmap, to the latest in open access publishing from Rebecca Wojturska and Dominique Walker, FAIR principles from Susanna-Assunta Sansone, and Eugenia Rodrigues on inclusivity and Citizen Science.

Other speakers – Malcolm Macleod, Jane Hillston, Alan Cambell, and Stephen Curry – focused on research culture and integrity. Notably, they reminded us that open practices aren’t just essential for replication and verification; they might also help in dealing with all kinds of bad behaviour: bullying, harassment, perhaps even research misconduct. As one would expect, the need to incentivise and reward openness was also a hot topic. Not a bad idea, especially if the aim is to change people’s behaviour for the better.

The session on training and education was particularly interesting, especially the middle two presentations, both of which focused on openness and pedagogic practice. First, Madeleine Pownall presented a synthesis of evidence relating to impact on student outcomes. Her findings suggest that exposure to open practices can improve scientific literacy, critical thinking, and core competencies, including understanding statistics and research methods.

Nicely complementing Madeleine’s study, Emma MacKenzie and Felicity Anderson gave us the benefit of hands-on experience. Speaking from either side of the student-supervisor relation, they described their use of open source tools, materials, and mind-sets in student projects. Here, too, we saw the development of core competencies, this time including the documentation, discussion, and resolution of errors.

The lessons from all three presenters are clear enough: make the resources of scholarly research accessible and students will engage with them enthusiastically, intelligently, and with self-awareness. Just imagine what might be achieved should such attitudes ever escape the classroom and reach the wider world.

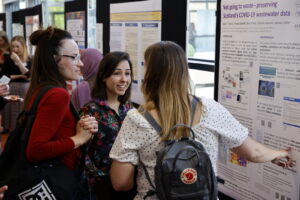

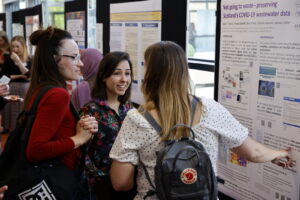

There were also poster sessions, culminating in first prize for Livia Scorza’s ‘Not going to waste – preserving Scotland’s COVID-19 waste water data,’ and there were workshops covering everything from public engagement to Open Research and AI.

The event concluded with a well-deserved show of appreciation for our organisers, Kerry and Laura. Meanwhile, everyone agreed that the day had been a lot of fun and educationally valuable. To see the ties between Open Research, Integrity, and Research Culture being drawn ever closer was both fascinating and encouraging; likewise, the enthusiasm for embedding openness in the student experience.

Best of all, however, it was good to be there in person, especially after the last two years. Speaking to real people and seeing others speak in all three available dimensions was really a very pleasant reminder of what it’s like to be a human being.

Simon Smith

Research Data Support Service

Photographs by Eugen Stoica: ES CC-BY 4.0
