A few weeks ago I attended the 9th International Digital Curation Conference in San Francisco. The conference was spread over four days, with two days for workshops, and two for the main conference. The conference was jointly run by the Digital Curation Centre and the California Digital Library. Unsurprisingly it was an excellent conference with much debate and discussion about the evolving needs for digital curation of research data.

The main points I took home from the conference were:

Science is changing: Atul Butte gave an inspiring keynote that contained an overview of the ways in which his own work is changing. In particular he explained how it is now possible to ‘outsource’ parts of the scientific process. The first is the ability to visit a web site to buy tissue samplesfor specific diseases which were previously used for medical tests, but which have now been anonymised and collected rather than being discarded. Secondly it is also now possible to order mouse trials to be undertaken, again via a web site. These allow routine activities to be performed more quickly and cheaply.

Big Data: This phrase is often used and means different things to different people. A nice definition given by Jane Hunter was that curation of big data is hard because of its volume, velocity, variety and veracity. She followed this up by some good examples where data have been effectively used.

Skills need to be taught: There were several sessions about the role of Information Schools in educating a new breed of information professionals with the skills required to effectively handle the growing requirements of analysing and curating data. This growth was demonstrated by how we are seeing many more job titles such as data engineer / analyst / steward / journalist. It was proposed that library degrees should include more technical skills such as programming and data formats.

The Data paper: There was much discussion about the concept of a ‘Data Paper’ – a short journal paper that describes a data set. It was seen as an important element in raising the profile of the creation of re-usable data sets. Such papers would be citable and trackable in the same ways as journal papers, and could therefore contribute to esteem indicators. There was a mix of traditional and new publishers with varying business models for achieving this. One point that stood out for me was that publishers were not proposing to archive the data, only the associated data paper. The archiving would need to take place elsewhere.

Tools are improving: I attended a workshop about Data Management in the Cloud, facilitated by Microsoft Research. They gave a demo of some of the latest features of Excel. Many of the new features seem to nicely fit into two camps, but equally useful and very powerful to both. Whether you are looking at data from the perspective of business intelligence or research data analysis, tools such as Excel are now much more than a spreadsheet for adding up numbers. They can import, manipulate, and display data in many new and powerful ways.

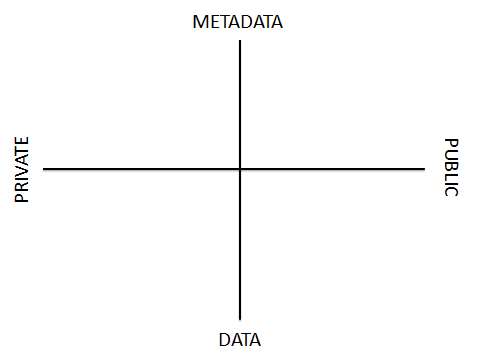

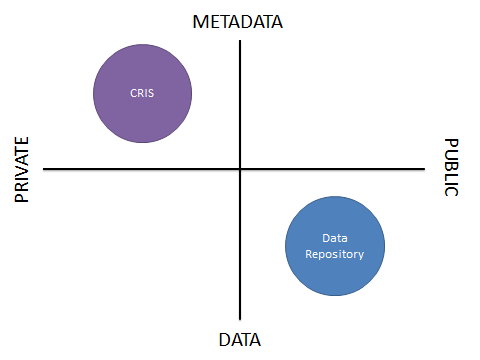

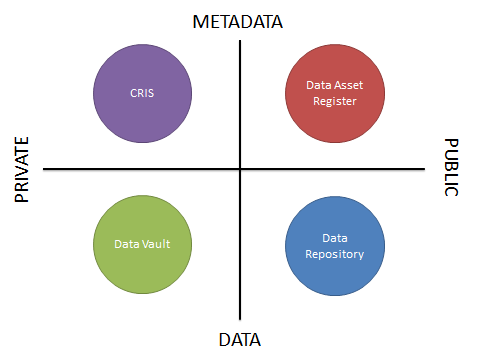

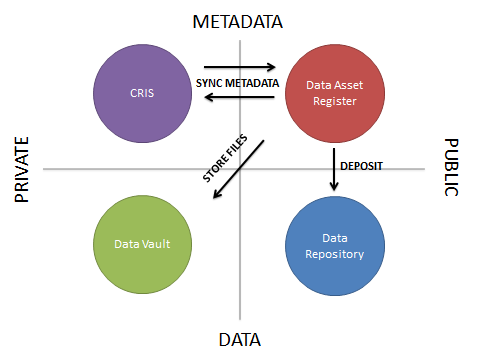

I was also able to present a poster that contains some of the evolving thoughts about data curation systems at the University of Edinburgh: http://dx.doi.org/10.6084/m9.figshare.902835

In his closing reflection of the conference, Clifford Lynch said that we need to understand how much progress we are making with data curation. It will be interesting to see the progress made and what new issues are being discussed at the conference next year which will be held much closer to home in London.

Stuart Lewis

Head of Research and Learning Services

Library & University Collections, Information Services