“The most valuable unstudied source for Scottish history….in existence.” Historiographer Royal, Professor T.C. Smout

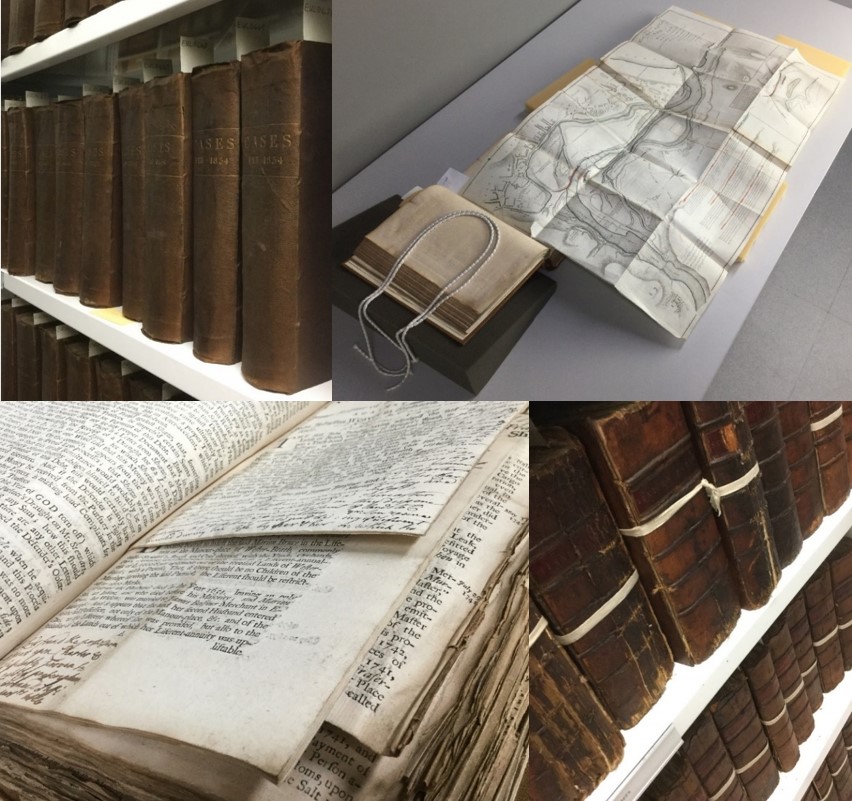

It has been a while since we provided an update on our Scottish Court of Session Papers Digitisation Project after the initial pilot in early 2017 (see previous blog post here). To recap, this project centres on an expansive collection of court records from Scotland’s highest civil court. The collection is held across three institutions here in Edinburgh: the Faculty of Advocates, the Signet Library and Edinburgh University Library (EUL), with EUL leading the project. There are over 5000 volumes made up of written pleadings of contested cases, answers, replies and case summaries, many of which have contemporary annotations. It is almost certainly the world’s largest single body of uncatalogued English language printed material before 1900. Many of the volumes are in very poor condition and require conservation care, and they often contain large foldouts which present many digitisation challenges.

The aim of this second pilot project is to digitise 300 volumes cover to cover, developing and testing workflows as we go. Next, automated item data extraction techniques will gather the key information from the images of the individual cases and documents in order to build up a catalogue record. Put simply, the result would be a searchable online catalogue of individual cases and their constituent parts, with viewable digital images. Another key strand is that every image will be run through an OCR (Optical Character Recognition) process to provide machine-readable text. This is no small feat with archaic text, and machine learning techniques have had to be applied.

Convention suggests that for a large digitisation project such as this it would be logical to create a catalogue record prior to digitisation. However, this is made almost impossible by the vast amount of uncatalogued material. During the first pilot I quickly learnt that entering catalogue information and metadata manually would be incredibly time-consuming. In light of this, we were encouraged to explore other avenues for harvesting the data through computational technology.

For this phase we have scaled things up considerably. We now have two i2S V-Shape scanners and our Project Conservator, Nicole Devereux, has already conserved the 310 volumes needed. We have spent a great deal of time putting together a workflow (and tweaking it!) which combines the V-Shape scanners and the PhaseOne IQ3 100MP cameras. The lion’s share of the work will be carried out on the high-speed V-Shape scanners, but where there are foldouts and larger volumes, these will be digitised here in the Digital Imaging Unit (DIU).

My colleague Susan Pettigrew and I discussed with Digital Scholarship Developer, Mike Bennet, how we could develop a system which would aid the data extraction process in order to separate documents and cases. We decided to incorporate coloured markers within the image frame which would denote the start (green marker) and end (red marker) of a case, and if a page is a foldout (yellow marker). We then employed our best Blue Peter skills to build a holder for easy insertion of the markers and colour charts which you can view in the images below. The software that Mike has developed can read these colours and record the separation of documents. This system seems to be working so far! The software is also able to auto-crop and auto-deskew the images, as seen below, and it can do this with very large batches of images.
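For readers curious how the marker colours could translate into case boundaries, here is a minimal sketch in Python. It is not Mike’s software: it assumes the colour detection has already happened and that each frame arrives as a filename paired with the marker seen in it (`"green"`, `"red"`, `"yellow"` or `None`). The names `Case` and `split_into_cases` are made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Optional, Tuple


@dataclass
class Case:
    """Pages belonging to one case, in scanning order (hypothetical structure)."""
    pages: List[str] = field(default_factory=list)
    foldouts: List[str] = field(default_factory=list)


def split_into_cases(frames: Iterable[Tuple[str, Optional[str]]]) -> List[Case]:
    """Group frames into cases using the marker colour detected in each frame.

    Assumption: the green marker sits in the frame of a case's first page and
    the red marker in the frame of its last page. Frames outside any
    green/red pair (covers, blank leaves) are skipped.
    """
    cases: List[Case] = []
    current: Optional[Case] = None
    for filename, marker in frames:
        if marker == "green":
            # A green marker opens a new case.
            current = Case()
            cases.append(current)
        if current is None:
            continue  # not inside a case
        current.pages.append(filename)
        if marker == "yellow":
            # A yellow marker flags this page as a foldout.
            current.foldouts.append(filename)
        elif marker == "red":
            # A red marker closes the case after this page.
            current = None
    return cases


frames = [
    ("0000001", None),      # e.g. a cover, outside any case
    ("0000002", "green"),
    ("0000003", "yellow"),
    ("0000004", "red"),
    ("0000005", "green"),
    ("0000006", "red"),
]
cases = split_into_cases(frames)
```

In this example `cases` holds two cases: the first with three pages, one of them flagged as a foldout, and the second with two pages.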

Foldouts are complicated! When scanning a volume we generate a high-quality TIFF file of each page, and each file is identified by a unique 7-digit ID number. A volume may have more than 1500 pages (yes… that’s going to be a lot of data requiring some hefty processing power). With each page we record the Volume Unique ID, the 7-digit filename and the page sequence number. These are the three essential pieces of metadata that we record at the digitisation stage to glue the project together.

We have a separate spreadsheet for each piece of digitisation equipment. When we come across a foldout we have to record the gap in the sequence. The original is then delivered to the DIU for photography of the foldouts, and the sequence number is inserted into the metadata for the original. Unfortunately this does require a number of spreadsheets to manage the progression of the project. Furthermore, there is no reliable pagination in the volumes, so when missing pages are discovered at the Quality Assurance stage and re-scanned, all the sequence numbers for that volume need to be reconfigured accordingly. Complex indeed!
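To give a flavour of the renumbering described above, here is a small Python sketch. It is illustrative only and not our actual spreadsheet workflow: the `PageRecord` structure, the `insert_rescan` function and the sample IDs are all hypothetical, and it assumes sequence numbers within a volume are consecutive integers.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class PageRecord:
    """The three pieces of metadata recorded per page (field names are assumed)."""
    volume_id: str   # Volume Unique ID
    filename: str    # 7-digit image ID
    sequence: int    # position of the page within the volume


def insert_rescan(records: List[PageRecord], new_filename: str,
                  at_sequence: int) -> List[PageRecord]:
    """Insert a re-scanned page at `at_sequence`, shifting later pages up by one."""
    if not records:
        raise ValueError("no existing records for this volume")
    volume_id = records[0].volume_id
    # Every page at or after the insertion point moves one place later.
    shifted = [
        replace(r, sequence=r.sequence + 1) if r.sequence >= at_sequence else r
        for r in records
    ]
    shifted.append(PageRecord(volume_id, new_filename, at_sequence))
    return sorted(shifted, key=lambda r: r.sequence)


records = [
    PageRecord("VOL-A", "0000101", 1),
    PageRecord("VOL-A", "0000102", 2),
    PageRecord("VOL-A", "0000103", 3),
]
# A missing page is found at QA and re-scanned as image 0000250;
# it belongs between the first and second pages.
updated = insert_rescan(records, "0000250", at_sequence=2)
```

The filenames never change, which is why a missed page ends up with an out-of-order ID: only the sequence numbers are rewritten to restore the reading order.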

We are beginning to come through the good end of the teething problems with equipment and workflows. Over the next few weeks we should have a better idea of how to measure the quantity of digitisation work that can be achieved over a given period of time. Predictions and projections, however, are proving particularly hard with this project as you never really know the state of play with a volume until you open it and begin digitising.

John Bryden, Assistant Photographer
